AudienceMx - Article

A/B Testing Post Formats: Simple Experiments to Improve Engagement

Learn simple A/B testing methods for post formats to improve engagement with lightweight experiments on hooks, CTA placement, and length.

Intro

If you want to grow an audience and build a professional brand, small experiments deliver big returns. This guide shows how to run lightweight A/B tests on post formats so you can learn what makes your network interact, comment, and share. The focus is practical: test hooks, CTA placement, and post length without complex analytics tools. You will learn how to define clear hypotheses, run repeatable tests, and interpret results using basic metrics most professionals can access. These methods are ideal for content strategists, social media managers, marketers, and entrepreneurs who need fast answers and measurable improvements in their posting routine.

Using simple experiments fits teams and individuals who do not have time or resources for enterprise measurement. The goal is to improve the signal you get from each post and to translate those insights into a replicable content routine. Throughout this post you will see examples tailored to LinkedIn engagement strategies, practical templates you can paste into your next post, and a lightweight measurement plan you can run with the tools you already use. If you use AudienceMx to create and iterate posts, the workflows below will pair directly with features like enhanced hook creation and unlimited drafts, so you can scale tests without slowing down your calendar.

Why lightweight A/B tests matter for LinkedIn engagement strategies

Big data and advanced analytics are valuable, but they are not required to learn what resonates with your audience. Lightweight A/B tests make experimentation accessible. When you test a single variable at a time, you reduce noise and learn faster. For professionals focused on LinkedIn engagement strategies, the most useful experiments answer questions like which opening line prompts a comment, where to place a call to action to boost replies, and how length affects reach for your specific network. Learn more in our post on Long-Term Content Strategies That Survive Algorithm Changes.

Light experiments also lower the time cost of testing. Instead of building a complex tracking setup, you can post two variations across consecutive days or to different segments of your audience and compare engagement using simple metrics. That speed helps you iterate weekly rather than quarterly, so your content evolves with your audience, not with stale assumptions.

Finally, lightweight testing supports better content planning. When you collect small, repeatable wins, you can incorporate those patterns into your content calendar. This creates consistency in performance, which is a core goal of effective LinkedIn engagement strategies. Over time those incremental improvements compound into higher visibility and stronger professional credibility.

Designing experiments that answer one question at a time

Good tests isolate a single variable. When you change multiple elements in the same test, you risk a misleading result. Start by choosing one dimension you want to optimize: hook, CTA placement, or length. Each experiment needs a hypothesis, a control, and a variant. A clear hypothesis looks like this: "If I shorten the first line to a single sentence, comments will increase by 15 percent compared to my usual two-sentence hook." Learn more in our post on Turn One Idea into Five LinkedIn Posts: Repurposing Frameworks That Scale Your Voice.

Keep these principles in mind when designing tests:

- Test one variable only. If you change tone and CTA placement at the same time, you will not know which change caused the effect.

- Use a consistent audience window. Run variations during similar days and times to limit timing bias.

- Repeat tests. Run each variation at least three times to observe consistent trends rather than one-off spikes.

- Document your test. Record date, time, audience context, and exact copy so you can replicate the winning format.

Choosing a control matters. Your control should be a post format you use regularly. If your normal posts are 200 words with a reflective hook and a CTA at the end, use that as control and create a variant that changes only one attribute, such as moving the CTA earlier in the post. Keep the control stable across multiple tests so you can track change over time.
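
The example hypothesis above reduces to simple arithmetic. The following is a minimal sketch, assuming you have an average comment count for the control and the variant; the function name `relative_lift` and all numbers are hypothetical and only illustrate the calculation.

```python
def relative_lift(control: float, variant: float) -> float:
    """Return the variant's change relative to the control, as a fraction."""
    if control <= 0:
        raise ValueError("control must be positive to compute a relative lift")
    return (variant - control) / control


# Hypothetical numbers, not real results: average comments per post.
control_comments = 20.0  # usual two-sentence hook
variant_comments = 24.0  # single-sentence hook
target_lift = 0.15       # the 15 percent from the example hypothesis

lift = relative_lift(control_comments, variant_comments)
hypothesis_supported = lift >= target_lift
print(f"lift={lift:.0%}, supported={hypothesis_supported}")
```

Writing the target down before you publish keeps the hypothesis honest: you compare the result to a number you chose in advance rather than one chosen after seeing the data.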

How to test hooks that capture attention

The opening line determines whether a reader scrolls or stops. For LinkedIn engagement strategies, the hook is a high-leverage element because it influences both impressions and interactions. Design hook experiments around clear alternatives: curiosity-driven, value-driven, and emotion-driven hooks. Each has a different psychological appeal. Learn more in our post on Personalize AI Writing: Template Library to Capture Your Unique Professional Voice.

Curiosity-driven hooks hint at an insight without fully revealing it. Example: "Most founders miss this simple rule that doubles follow-up responses." Value-driven hooks tell the reader what they will gain. Example: "Three tactics to shorten your sales cycle by 30 percent." Emotion-driven hooks connect through vulnerability or controversy. Example: "I failed at product-market fit for six months. Here is what saved us." Test these against each other by keeping the rest of the post constant.

Testing procedure for hooks:

- Choose a single post idea you will use consistently across variations.

- Create three versions of the opening line: curiosity, value, emotion.

- Publish each version on separate days within the same week and time window.

- Measure reach, reactions, comments, and click rate on any link or article you include.

- Repeat the cycle three times to validate patterns.
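
After three cycles, the results can be summarized per hook style. This is a rough sketch, assuming you recorded impressions and comments for each post; the data below is invented for illustration and `mean_comment_rate` is a hypothetical helper, not an AudienceMx feature.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical results: (hook_type, impressions, comments) for each post,
# three cycles of three hook styles.
posts = [
    ("curiosity", 1000, 10), ("value", 1000, 15), ("emotion", 1000, 12),
    ("curiosity", 800, 8),   ("value", 800, 12),  ("emotion", 800, 8),
    ("curiosity", 1200, 12), ("value", 1200, 18), ("emotion", 1200, 18),
]


def mean_comment_rate(results):
    """Average the per-post comment rate for each hook type."""
    rates = defaultdict(list)
    for hook, impressions, comments in results:
        if impressions > 0:  # skip posts with no impression data
            rates[hook].append(comments / impressions)
    return {hook: mean(values) for hook, values in rates.items()}


summary = mean_comment_rate(posts)
best_hook = max(summary, key=summary.get)
print(summary, best_hook)
```

Averaging per-post rates, rather than pooling raw counts, keeps one high-reach day from dominating the comparison.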

Interpretation tips: if curiosity hooks drive more clicks but value hooks drive deeper comments, you might choose curiosity to feed top-of-funnel channels and value to build relationships with key followers. Use AudienceMx tools to generate multiple hook options quickly, then apply this testing framework to validate which hook style aligns with your objectives.

Testing CTA placement for better responses

Call to action placement affects behavior more than many realize. A CTA near the top can capture attention when attention spans are short. A CTA at the end invites reflection after the reader consumes the full idea. Testing placement helps determine which position works best for your audience and objectives. For LinkedIn engagement strategies, CTAs can be as simple as "Comment your experience" or "Message me to learn more".

Common CTA placement options to test:

- First line CTA - ask early for a quick reaction.

- Middle CTA - place the ask after a short value point.

- End CTA - reflect and then ask.

Experiment plan:

- Pick a CTA that matches your goal - comments, clicks, or messages.

- Write three posts that are identical except for CTA placement.

- Rotate which placement gets which posting slot across repetitions, so no placement always lands in your audience's most active window.

- Measure direct responses, not just total reactions. Track comments and messages separately.

- Aggregate results and repeat the test over a few weeks to smooth daily variances.
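
One way to rotate posting slots across the three placements is to shift the order by one position each week, so every placement appears in every slot once over three weeks. The sketch below assumes three hypothetical weekday slots; the slot labels and the function name `rotation_schedule` are illustrative only.

```python
placements = ["first_line", "middle", "end"]
slots = ["Wed 09:00", "Thu 09:00", "Fri 09:00"]  # hypothetical posting slots


def rotation_schedule(placements, slots, weeks):
    """Shift the placement order by one each week (a Latin-square rotation)."""
    n = len(placements)
    if len(slots) != n:
        raise ValueError("need exactly one slot per placement")
    schedule = []
    for week in range(weeks):
        for i, slot in enumerate(slots):
            schedule.append((week + 1, slot, placements[(i + week) % n]))
    return schedule


schedule = rotation_schedule(placements, slots, weeks=3)
for week, slot, placement in schedule:
    print(f"week {week}: {slot} -> {placement}")
```

With this rotation, a difference between placements is less likely to be a side effect of one weekday being busier than another.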

Example interpretation: if the first-line CTA increases quick comments but decreases shares, you might use an early CTA for posts that aim to seed conversations and an end CTA for content intended to be shared widely. With AudienceMx you can create CTA templates, test them rapidly, and store winning CTAs in a content library to maintain consistency across campaigns.

Testing post length: short, medium, or long

Length influences attention, perceived effort, and algorithmic distribution. Short posts are scannable and often generate quick reactions. Medium posts give room for a compact story or tip. Long posts allow nuanced arguments or case studies that attract saves and deeper comments. For LinkedIn engagement strategies, the best length depends on your goal and your audience's behavior.

Design length experiments like this:

- Create one long version (400-800 words), one medium version (150-300 words), and one short version (40-80 words) of the same core idea.

- Ensure each version starts with the same or equivalent hook so the comparison isolates length.

- Run the three variations across similar posting windows and measure reach, reactions, comments, and saves.

- Pay attention to audience retention clues, such as whether long posts generate more thoughtful replies or short posts get quicker reactions.
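
Before publishing, it can help to confirm that each draft actually falls inside its intended word-count band. This is a small sketch using the ranges listed above; the helper `length_band` is hypothetical, and counting words by splitting on whitespace is a simplifying assumption.

```python
from typing import Optional

# Word-count bands taken from the length ranges in the list above.
LENGTH_BANDS = {"short": (40, 80), "medium": (150, 300), "long": (400, 800)}


def length_band(text: str) -> Optional[str]:
    """Return the band a draft falls into, or None if it sits between bands."""
    words = len(text.split())
    for name, (low, high) in LENGTH_BANDS.items():
        if low <= words <= high:
            return name
    return None


draft = "word " * 60  # stand-in for a real 60-word draft
print(length_band(draft))
```

A result of `None` means the draft sits between bands, which blurs the comparison; trim or extend it before the test.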

Length decisions often relate to your objective. If you are building thought leadership and want high-quality comments, long posts that include data or concrete stories may perform better. If your objective is brand visibility and frequent posting, short posts or a series of micro-posts may be more efficient. The best approach is to build a content mix based on test outcomes rather than a single rule.

How to measure results without advanced analytics

Advanced analytics are helpful but not necessary for meaningful insights. Use simple, accessible metrics available in most professional feeds: impressions or reach, reactions, comments, shares, and direct messages. The growth of followers over time is also a useful trailing indicator. Track absolute numbers and rates to account for differences in audience size and posting time.

Key metrics to track for LinkedIn engagement strategies:

- Reaction rate - reactions divided by impressions or reach where available.

- Comment rate - comments divided by impressions or reach where available, otherwise comments per post. This is a strong indicator of conversation quality.

- Share rate - how often your idea is redistributed by your audience.

- Message count - number of direct messages in response to the post.

- Follower growth after a set of posts - helpful to link format changes to long-term momentum.
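
The metrics above can be computed from the raw counts you copy out of your post analytics. The sketch below normalizes reactions, comments, and shares by impressions, which is one reasonable choice when impressions are available; the class `PostResult`, the function names, and the numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PostResult:
    impressions: int
    reactions: int
    comments: int
    shares: int
    messages: int


def rate(count: int, impressions: int) -> Optional[float]:
    """Return count / impressions, or None when impressions are unavailable."""
    if not impressions:
        return None
    return count / impressions


def engagement_rates(post: PostResult) -> dict:
    """Collect the per-impression rates plus the raw message count."""
    return {
        "reaction_rate": rate(post.reactions, post.impressions),
        "comment_rate": rate(post.comments, post.impressions),
        "share_rate": rate(post.shares, post.impressions),
        "message_count": post.messages,
    }


# Hypothetical post, not real data.
example = PostResult(impressions=2000, reactions=60, comments=12, shares=4, messages=3)
rates = engagement_rates(example)
print(rates)
```

Returning `None` instead of zero when impressions are missing keeps an unmeasured post from looking like a failed one.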

Simple tracking sheet columns:

- Date

- Post title / short description

- Format tested - hook type, CTA placement, length

- Impressions or reach

- Reactions

- Comments

- Shares

- Messages

- Outcome summary and next step
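
If you prefer a plain CSV file to a spreadsheet app, the columns above map directly onto Python's standard `csv` module. This is a minimal sketch: the column keys, the `append_row` helper, and the sample row are hypothetical, and it writes to an in-memory buffer so it has no side effects. Swap the buffer for a file opened with `newline=""` to keep a real log.

```python
import csv
import io

# Column keys mirroring the tracking sheet columns listed above.
COLUMNS = [
    "date", "post", "format_tested", "impressions",
    "reactions", "comments", "shares", "messages", "outcome",
]


def append_row(handle, row, write_header=False):
    """Write one test result; DictWriter rejects keys that are not in COLUMNS."""
    writer = csv.DictWriter(handle, fieldnames=COLUMNS)
    if write_header:
        writer.writeheader()
    writer.writerow(row)


buffer = io.StringIO()  # stand-in for a file on disk
append_row(buffer, {
    "date": "2024-01-10",  # invented sample values
    "post": "Meeting tips",
    "format_tested": "cta_placement=first_line",
    "impressions": 1500,
    "reactions": 40,
    "comments": 9,
    "shares": 2,
    "messages": 1,
    "outcome": "More comments than control; repeat next week",
}, write_header=True)
print(buffer.getvalue())
```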

Tip for small teams: use a shared spreadsheet to record outcomes after each post. If you use AudienceMx, export drafts and tag them with the test variable to quickly generate reports of winning formats. Over time you will compile a bank of proven formats that map to specific objectives like lead generation or community building.

Interpreting results and avoiding common errors

When evaluating test results, watch for noise. A high-performing post may have benefited from a cofounder resharing it, a trending topic alignment, or a spike in network activity. To reduce false positives, run each variation multiple times and look for consistent improvement across repetitions. Statistical significance is useful but not required for practical decisions when you have repeated patterns.

Common mistakes to avoid:

- Changing multiple variables at once. This creates ambiguity.

- Testing during inconsistent windows. Audience activity shifts by day and time.

- Drawing conclusions from single posts. One viral post does not necessarily indicate a pattern.

- Not documenting context. Without context you cannot replicate or understand external influences.

Example of a cautious interpretation: if moving the CTA to the first line produced a 30 percent increase in comments in two out of three tests, consider that a winning pattern. If the effect appeared once and then disappeared, continue testing with variations of the winning CTA to confirm stability. Use AudienceMx to save iterations that perform well and to automate repeated posting of the winning format when appropriate.
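
The "two out of three" reading above can be written as a simple rule. The sketch below assumes paired results, one control and one variant value per repetition; the function name `is_winning_pattern` and the sample numbers are hypothetical, and the default thresholds come from the example rather than from any statistical standard.

```python
def is_winning_pattern(control, variant, min_lift=0.30, min_wins=2):
    """Return True if the variant beats the control by min_lift in enough repetitions.

    control and variant are paired per-repetition values, e.g. comment counts.
    """
    if len(control) != len(variant):
        raise ValueError("control and variant need one value per repetition")
    wins = 0
    for c, v in zip(control, variant):
        # Repetitions with a zero control are skipped: lift is undefined there.
        if c > 0 and (v - c) / c >= min_lift:
            wins += 1
    return wins >= min_wins


# Hypothetical comment counts across three repetitions.
control_runs = [10, 12, 11]
consistent_variant = [14, 16, 11]  # clears the threshold in two of three runs
one_off_variant = [14, 12, 11]     # clears it only once

print(is_winning_pattern(control_runs, consistent_variant))
print(is_winning_pattern(control_runs, one_off_variant))
```

This is a consistency check, not a significance test. It guards against acting on a single spike, but it does not tell you how likely the pattern is to hold.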

Practical templates and scripts to run quick A/B tests

Templates speed up experiment setup and ensure you focus on one variable at a time. Below are ready-to-use templates for hooks, CTA placements, and lengths you can paste into your editor and adapt. Each template includes a control and a variant so you can run a quick A/B test.

Hook templates

- Control (value): "Three quick wins for improving meeting outcomes." Body: keep original material.

- Variant A (curiosity): "Most teams overlook one fix that changes every meeting." Body: same as control.

- Variant B (emotion): "I used to dread weekly meetings. These three changes made them productive." Body: same as control.

CTA placement templates

- Control (end CTA): "If this helps, share your experience in the comments." Place at end.

- Variant A (early CTA): "Comment if you try any of these today." Place in first line, then value points.

- Variant B (mid CTA): After two points, insert "Would you try this? Comment below." Then continue.

Length templates

- Short version: One-sentence hook, one-sentence takeaway, one-sentence CTA.

- Medium version: Hook, three concise bullets or short paragraphs, CTA.

- Long version: Hook, narrative or case study with data or steps, reflective question or CTA inviting deeper conversation.

Use these templates to create three posts on the same topic in the same week. Track the outcomes, then adjust. The templates are intentionally simple so you can iterate fast without over-optimizing early.

Examples of real experiments and what they revealed

Example 1 - Hook test

A content strategist tested a curiosity hook versus a value hook across six posts. The curiosity hook got 20 percent more clicks to the article, while the value hook generated 35 percent more meaningful comments. The insight: curiosity is better for driving click-throughs, value is better for community engagement. The strategist used curiosity hooks for article promotion and value hooks for relationship-building posts. This aligns with typical LinkedIn engagement strategies that separate reach objectives from conversation objectives.

Example 2 - CTA placement

An entrepreneur ran three posts that were identical except for CTA placement: first line, middle, and end. The first-line CTA increased direct messages by 40 percent but reduced the share rate. The end CTA increased shares but produced fewer messages. The entrepreneur created a mixed plan: early CTAs on posts meant to generate leads and end CTAs on posts meant to spread brand ideas.

Example 3 - Length experiment

A consultant tested short, medium, and long versions of the same case study over three-week cycles. Long posts brought more saves and longer comments, while short posts got quick reactions and slightly higher impressions. The consultant allocated long posts to Mondays for thought leadership and short posts for rapid updates midweek. These small changes improved overall engagement quality without adding significant production time.

Addressing objections and practical constraints

Objection: "I do not have time for repeated tests." Solution: Treat each post as a micro-test. Use templates and automation to reduce time. Two or three mini-tests per week will compound into strong data within a quarter.

Objection: "My network is too small to get meaningful results." Solution: Small audiences still reveal directional insights. Focus on rates like comments per post and message rate rather than raw counts. Repeat tests to build confidence and combine findings across similar posts.

Objection: "I get too many external spikes and noise." Solution: Document context; when a post coincides with an external event, mark it and exclude it from the core sample. Repeat the test in a different week to verify results.

Objection: "I cannot measure impressions reliably." Solution: Use reactions and comments as proxy engagement metrics. Compare relative increases rather than absolute numbers. If possible, use follower growth and message count as additional indicators.

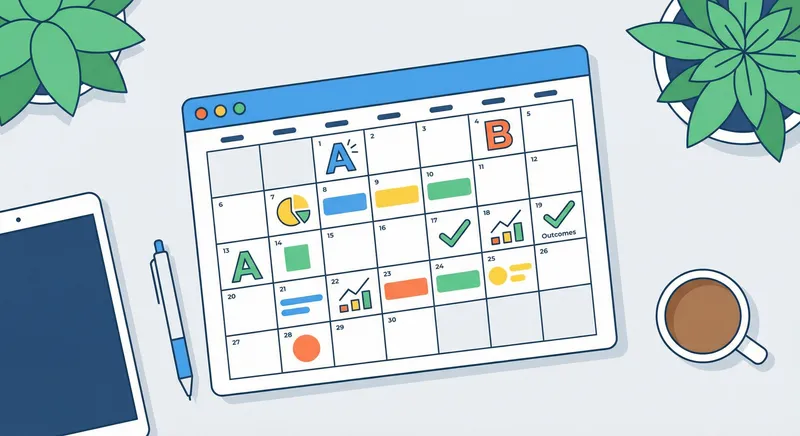

Scaling winners into a content plan

Once you identify winning formats, scale them methodically. Create a content calendar that alternates formats based on objective. For example, reserve two days per week for short, high-impression formats, one day for long thought leadership, and one day for community-oriented posts with early CTAs. This cadence balances reach and relationship building and aligns with common LinkedIn engagement strategies that require both visibility and meaningful interaction.

Operational steps to scale winners:

- Store winning templates in a shared library.

- Use AudienceMx features like the content ideas generator and personalized post generation to expand winning formats quickly.

- Assign owners for content themes to maintain voice consistency.

- Schedule recurring tests to ensure formats stay relevant as your audience evolves.

By systematizing winners you avoid the trap of one-off success. The goal is consistent improvement, not a single viral moment. Use automation where it saves time, but keep the experiment loop active so you can adapt to new audience signals and trends.

Checklist: a repeatable process for A/B testing post formats

Use this checklist before you publish any test variation:

- Define hypothesis and metric to measure.

- Choose a single variable to change.

- Write control and variant posts using templates.

- Schedule posts in similar time windows and days.

- Record outcomes in a shared tracking sheet.

- Repeat each variation at least three times.

- Analyze trends and decide whether to scale or re-test.

When this checklist becomes routine, experimentation fits into your existing process rather than becoming additional work. AudienceMx can accelerate steps like drafting and storing winning formats so your team moves from insight to execution faster.

Practical CTA and next steps

If you are ready to run your first round of tests, start with one hook experiment and one CTA placement experiment this week. Use the templates above, track results in a simple spreadsheet, and repeat each variation three times. If you use AudienceMx, generate five hook variants in seconds, draft both control and variant posts, and schedule them across the week to collect data fast.

Adopt a weekly experiment rhythm: plan on Monday, draft Tuesday, publish Wednesday to Friday, and analyze over the weekend. That cycle keeps learning frequent and actionable without overwhelming your calendar. Over a month you will have enough data to make confident format choices aligned to your goals, whether that is lead generation, community building, or thought leadership.

Conclusion

Lightweight A/B testing of post formats is a practical, low-cost way to improve your presence on professional networks and support broader LinkedIn engagement strategies. The methods in this guide show how to set a clear hypothesis, test hooks, CTA placement, and post length, and evaluate results using simple metrics. You do not need advanced analytics to learn what works. Instead, focus on clarity in your testing design, consistent documentation, and repeated runs to surface reliable patterns.

Start small and build a system. Use templates to reduce cognitive load on the days you write and schedule, and apply a checklist to keep tests clean and repeatable. Document context and repeat winning variations so that your content calendar begins to reflect proven formats rather than guesses. This steady approach creates compounding gains: higher-quality comments, better message conversion, and more effective use of your time. For professionals balancing client work, hiring, or product development, these incremental improvements matter more than chasing occasional viral posts.

Finally, pair experimentation with tools that speed drafting and iteration. If you are using an AI content tool, generate multiple hook options, create variant CTAs, and store winning formats to deploy at scale without losing voice coherence. The combination of disciplined testing and efficient tooling helps you maintain momentum. Over time you will not only discover the formats that perform best but also build a repeatable content engine that fits your workflow and amplifies your professional story.

Ready to run your first A/B tests on post formats? Use AudienceMx to draft multiple variants, store winning templates, and automate parts of your content calendar so you can focus on strategy and conversations that move your business forward. Small experiments plus consistent execution deliver meaningful improvements in visibility and engagement.